Where is the latency hiding in your infrastructure?

And why Nirvana rebuilt the full cloud stack

Latency is often discussed across different parts of infrastructure, making it unclear where delays actually occur. In reality, it rarely comes from a single component but accumulates across the entire stack—from the moment a request leaves your application to when the response returns. To illustrate this, we break down where latency typically hides in modern workloads using a blockchain transaction as a simple example.

1. Application Access Layer (APIs, RPC, Queries)

Every system starts with an application request—API calls, database queries, AI inference, or RPC calls to a node. Many operations require multiple round trips before execution begins.

Blockchain is a clear example. A single transaction may trigger calls like eth_getBalance, eth_call, and eth_estimateGas. If each request takes ~50 ms, that’s already ~200 ms before the transaction is even sent.

This same pattern exists across modern distributed systems—multiple requests stack together, and latency compounds quickly.

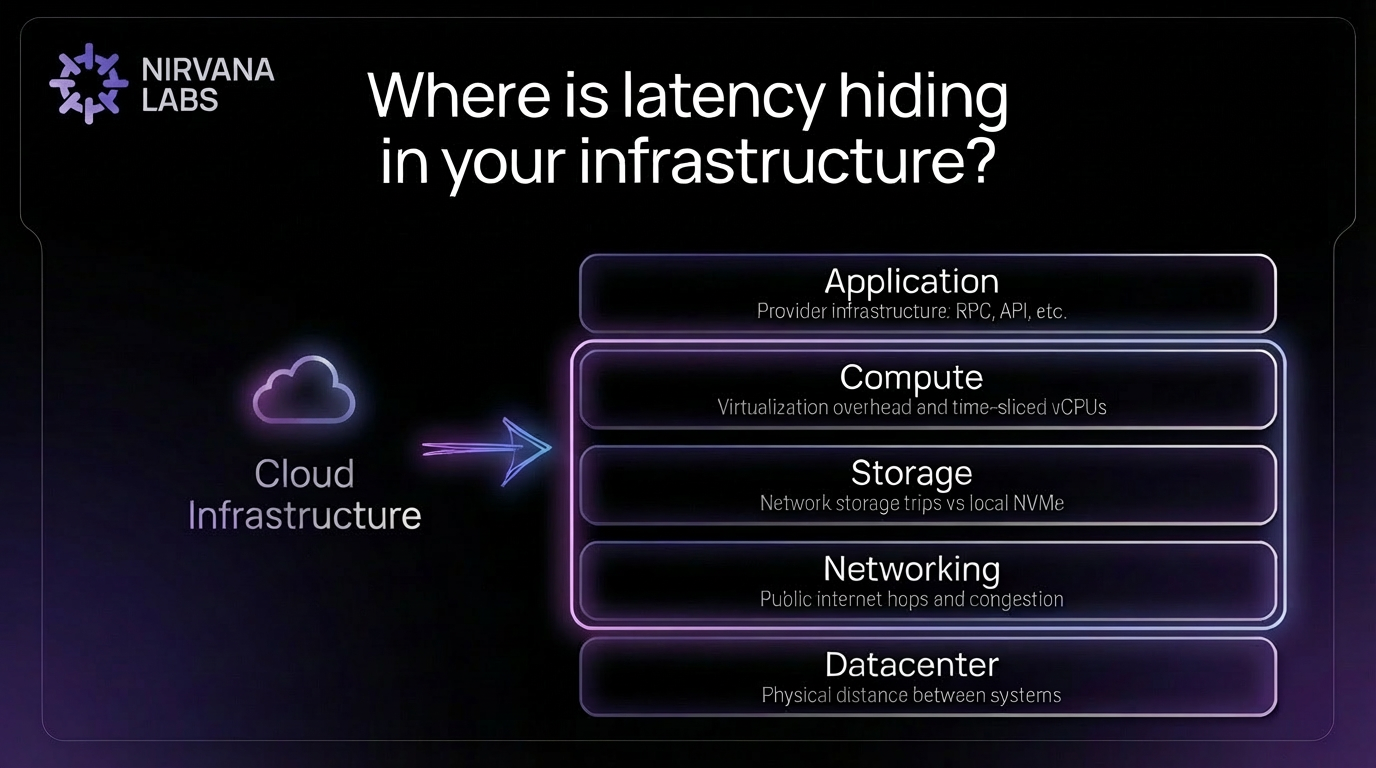

2. Cloud Infrastructure (Compute, Storage, Networking)

Once a request reaches your infrastructure, performance depends on three core layers: compute, storage, and networking. This is where much of the hidden latency in modern systems appears.

Compute, Hardware & Virtualization

- Virtualization Tax:

More VMs per physical server mean heavier virtualization and higher latency. The choice of hypervisor software itself adds an unavoidable delay. - Throttling:

Web3 requires high clock speeds (3.5GHz+). Standard cloud "vCPUs" are merely time-slices of a chip; if throttled, you finish verifying one block just as the next arrives, creating a permanent data "traffic jam".

Storage

- Local NVMe:

Offers the lowest latency but carries a high risk: if the physical disk fails, the data is gone. In Web3, where blocks arrive continuously, downtime is not an option. - Network-Attached Storage:

Decouples storage from compute. Your CPU might be in one rack while your disk is blocks away, making every state read a literal trip across a network that creates cumulative lag. - IOPS Ceiling:

Most hyperscalers cap your IOPS (Input/Output Operations Per Second). Once you exhaust your "burst credits," your speed drops unless you pay to unlock better performance.

Networking

- Public Internet:

For many teams, the public internet is the default and often the most practical option. However, it is a shared global network with multiple routing hops, variable congestion, and unpredictable queuing. During peak periods, packets may traverse suboptimal paths, introducing jitter and tail latency that directly impacts time-sensitive workloads. - Private Connectivity:

Private networking (such as direct cross-connects or private fiber) reduces routing variability and isolates traffic from broader internet congestion. By minimizing hops and avoiding shared bottlenecks, it improves latency consistency — especially important for RPC, trading, and validator workloads where tail latency matters.

3. Datacenter (Geography & Physical Distance)

Even with optimized infrastructure, physics still applies. The distance between datacenters determines how long data takes to travel. For example, requests moving between the U.S. and Japan can add 150–200 ms of round-trip latency.

For time-sensitive systems like trading engines, real-time APIs, or blockchain infrastructure, this geographic delay can significantly impact performance. Even when compute, storage, and networking are optimized, physical distance remains a fundamental limit.

The Total Latency Calculation

To understand true performance, you need to consider the friction added at every layer of the infrastructure stack:

Application + Compute + Storage + Networking + Datacenter

The sum of these layers determines your true end-to-end latency.

It’s also important to note that some sources of latency sit outside infrastructure control, such as blockchain consensus mechanisms or a user’s own internet connection. These factors are determined by protocol design and end-user networks rather than the underlying cloud infrastructure.

How Nirvana Rebuilds the Stack

Traditional clouds were designed for general-purpose workloads and often introduce layers of abstraction that add latency. Nirvana rebuilds the infrastructure stack from the metal up to remove these sources of friction.

Dedicated Compute

Workloads run on high clock-speed bare-metal hardware with predictable resources, avoiding heavy virtualization and noisy neighbors.

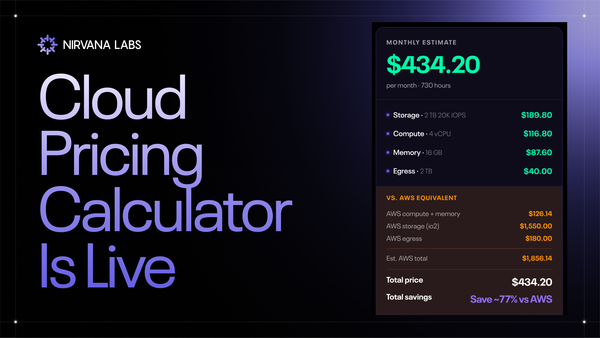

High-Performance Storage

Accelerated Block Storage (ABS) delivers 20K guaranteed baseline IOPS, up to 600K burst, and sub-ms latency, combining cloud flexibility with near-NVMe performance.

Integrated Networking

Compute, storage, and networking operate within the same high-performance datacenter fabric, reducing network hops for real-time workloads.

By rebuilding compute, storage, and networking together, Nirvana removes these layers so your latency reflects physics, not infrastructure overhead.

Interested in making your product faster?

Get in touch.

The Performance Cloud for Modern Workloads.

High Clock Speed Compute. Low latency Storage. Radically Cheaper Bandwidth.

Powering Web3, AI, and real-time systems.

Learn more at Nirvana Labs

Nirvana Cloud| Pricing | Blog | Docs | Twitter | Telegram | LinkedIn